Generative AI Image Creation Does Come With a Cost

OpenAI released Image 2.0 yesterday. The headlines were predictable: unprecedented fidelity, near-perfect text rendering, multi-panel generation, output that is hard to distinguish from human-made results. A restaurant menu generated by the model could be placed in front of customers without anyone noticing something was off. That is impressive. That is also the problem.

Let me tell you about the first time I got genuinely unsettled by AI-generated imagery. It was not some dramatic deepfake of a politician. It was a LinkedIn post -- a headshot of a "thought leader" sharing insights on nonprofit fundraising strategy. Clean background. Professional framing. Confident expression. Everything about it read as a real person with a real career. The account had thousands of followers. The content was being shared and cited. I spent twenty minutes trying to confirm whether this person existed before I gave up and moved on. That twenty minutes was the cost. And I never did find out.

Now scale that by several orders of magnitude and add photorealistic text rendering accurate enough to pass as a real document.

OpenAI was founded with a stated mission: ensure that artificial general intelligence benefits all of humanity. That framing appeared in the original charter, in public statements, in the reasoning given for why a safety-focused lab needed to exist at all. Whether or not you take that seriously as a governing principle, it was the justification offered for why this technology was being built in the first place.

How Does GenAI Image Creation Serve the Greater Good, Exactly?

Image 2.0 is not a safety product. It is a capability product. And the capability it delivers -- the ability to generate images at up to 2K resolution with accurate small text, iconography, UI elements, and dense compositions -- is precisely what bad actors need to make synthetic content indistinguishable from real content at scale.

The copyright picture is already complicated. AI models are trained on billions of images, many protected by copyright, scraped without the creators' consent. In the US, you cannot copyright a purely AI-generated image because copyright law protects only works created by humans -- which means anyone can copy and redistribute it without legal consequence under copyright law. So the internet is about to absorb a massive volume of photorealistic synthetic imagery that carries no authorship, no accountability, and no legal protection against reuse. It is not a legal gray zone. It is a structured information void.

What this does to user experience and perception is not theoretical. Trust in visual content online was already degrading before Image 2.0. Platforms that depend on authentic imagery -- editorial, documentary, social impact, nonprofit communications -- are going to face an accelerating credibility problem as synthetic images become easier to produce and harder to identify. The people who will feel this first are not tech workers. They are the organizations trying to document real conditions, real communities, and real outcomes. When synthetic imagery is cheap and abundant and indistinguishable, authentic documentation becomes harder to believe.

There is also a simpler harm that does not get enough attention: the homogenization of visual culture. Every AI image generator has aesthetic tendencies baked into its training data. Image 2.0 can follow complex stylistic constraints at a level of specificity and fidelity that earlier models could not achieve -- which sounds like creative freedom but often produces a narrow band of polished, averaged, expectation-confirming output. When that output floods every website, every social feed, every marketing asset, the visual texture of the internet converges. Everything starts to look like everything else -- and that convergence is not neutral. It reflects whose work was scraped, whose aesthetics were averaged, and whose voice gets amplified at scale.

There is something worth saying plainly here: real art involves humans. Creativity is not a parameter setting. It is the product of experience, intention, struggle, and point of view -- things that cannot be automated because they are not processes. They are conditions of being alive. When we talk about generative AI image creation as a creative tool, we are borrowing language that does not quite fit. The tool generates. The human creates. Collapsing that distinction is not just semantically sloppy -- it gradually devalues the thing that makes human creative work worth anything in the first place. Every field that depends on visual storytelling -- journalism, documentary, advocacy, design, fine art -- has a stake in keeping that line visible.

None of this means generative image tools have no legitimate use. They do. But "has legitimate uses" is not the same as "benefits humanity." OpenAI's own framing invites a harder question than the one most coverage is asking. The question is not whether Image 2.0 is technically impressive. It clearly is. The question is what problem it is actually solving, for whom, and at what cost to the ecosystem that visual communication depends on.

The model's release notes mention reasoning capabilities, web search integration, and non-Latin script support. What they do not mention is what happens to the internet when generating a photorealistic, text-accurate, contextually grounded image takes a few minutes and costs fractions of a cent.

That question belongs in the release notes. It probably will not appear there.

This post is not a call to avoid the tools. It is a call to slow down before you reach for them. Have an honest conversation with yourself first -- about what you are actually trying to communicate, who you are communicating it to, and whether synthetic imagery serves that goal or quietly undermines it. Then have that conversation with your stakeholders and your clients. Because the consequences of misusing these tools are not abstract. They show up in eroded trust, in credibility gaps, in the slow degradation of what authentic visual communication even means anymore.

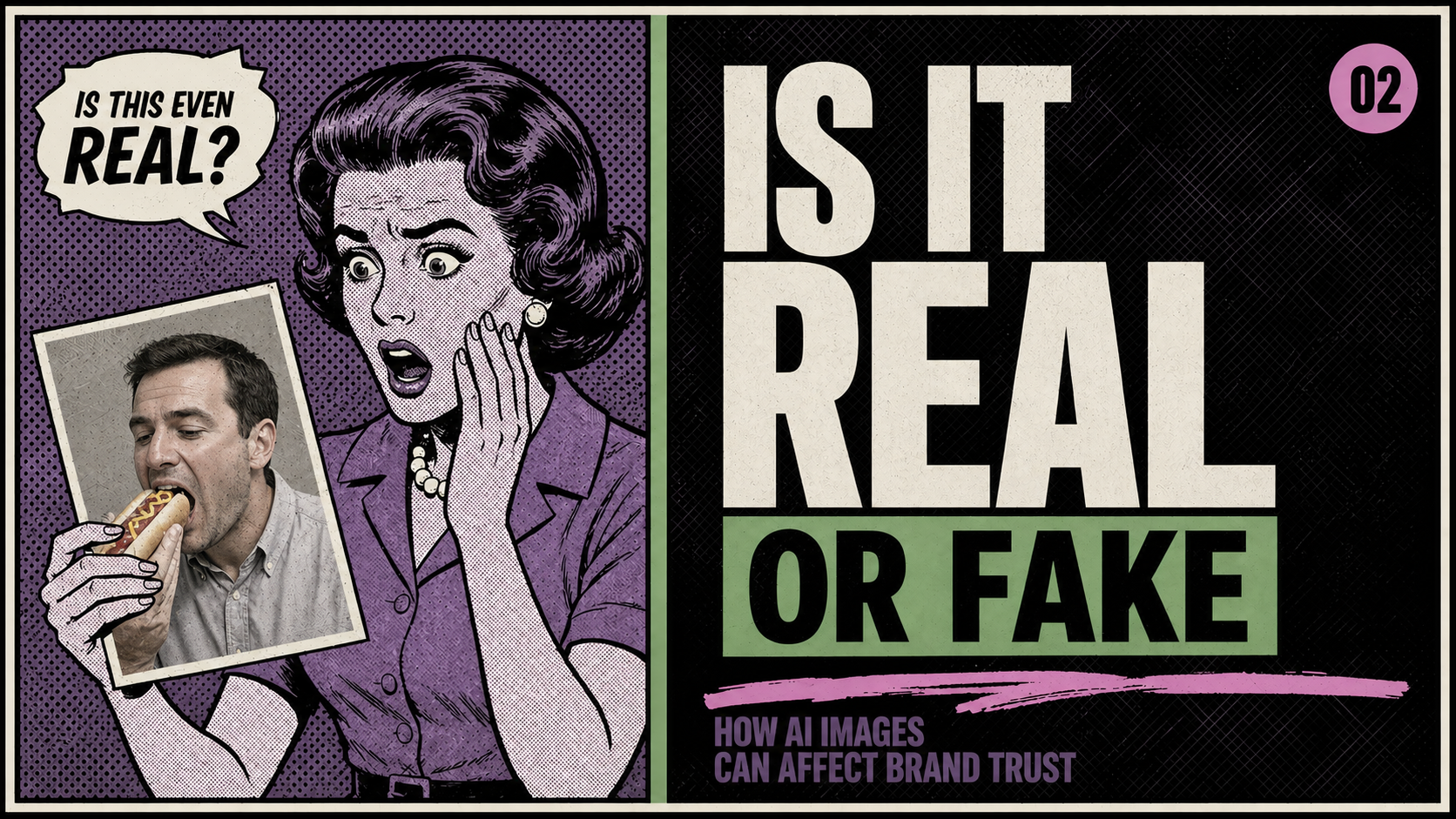

Image 2.0 is impressive technology. That is exactly why it deserves more than a quick prompt and a download. So the next time you reach for a generative AI image tool, ask yourself one question first: will this image lead people to ask of your brand, "Is it real or fake?"